Quantum computing continues to face a stubborn engineering mismatch: the field can demonstrate advanced quantum operations, but it struggles to bring enough qubits together in a single, well-organized machine to make those operations durable. The constraint is hardly imagination; it is control at scale.

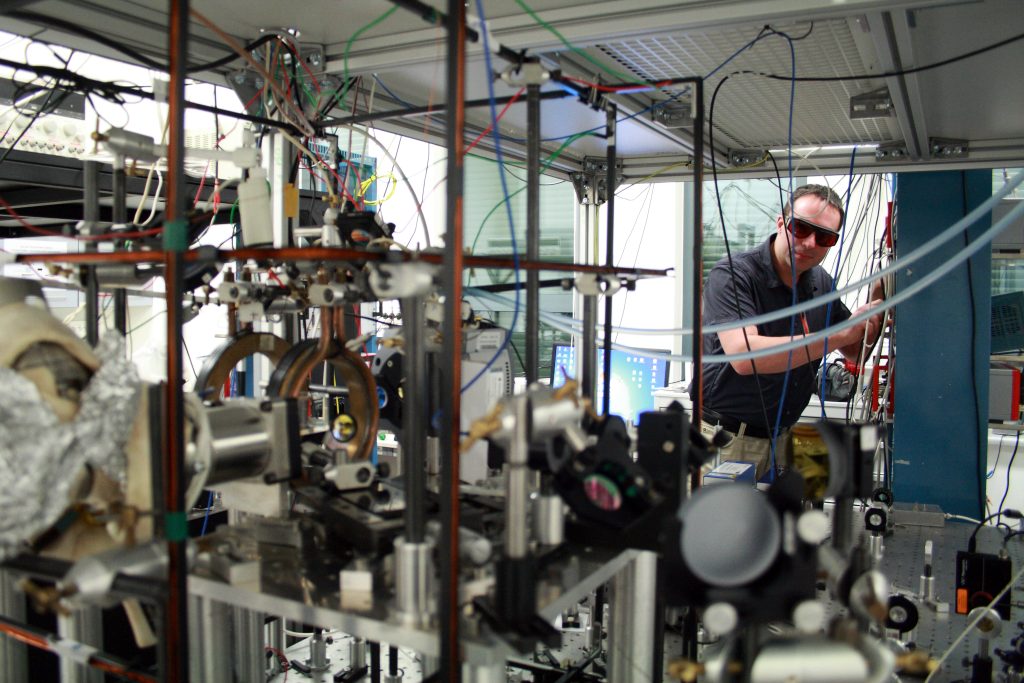

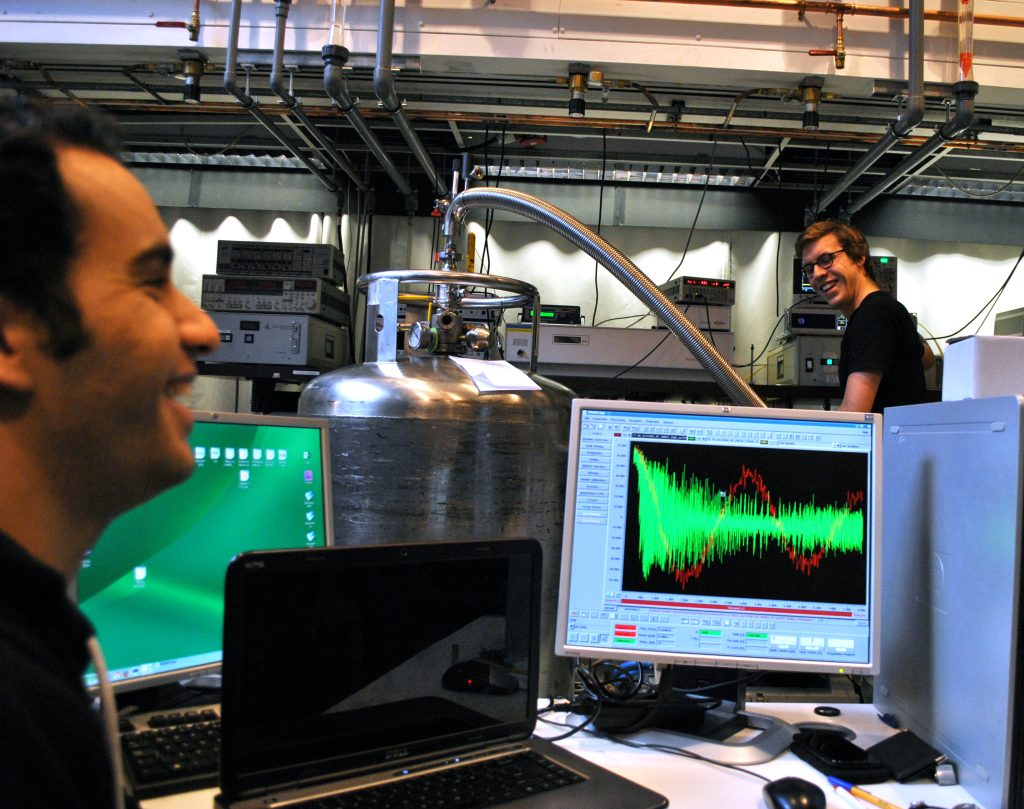

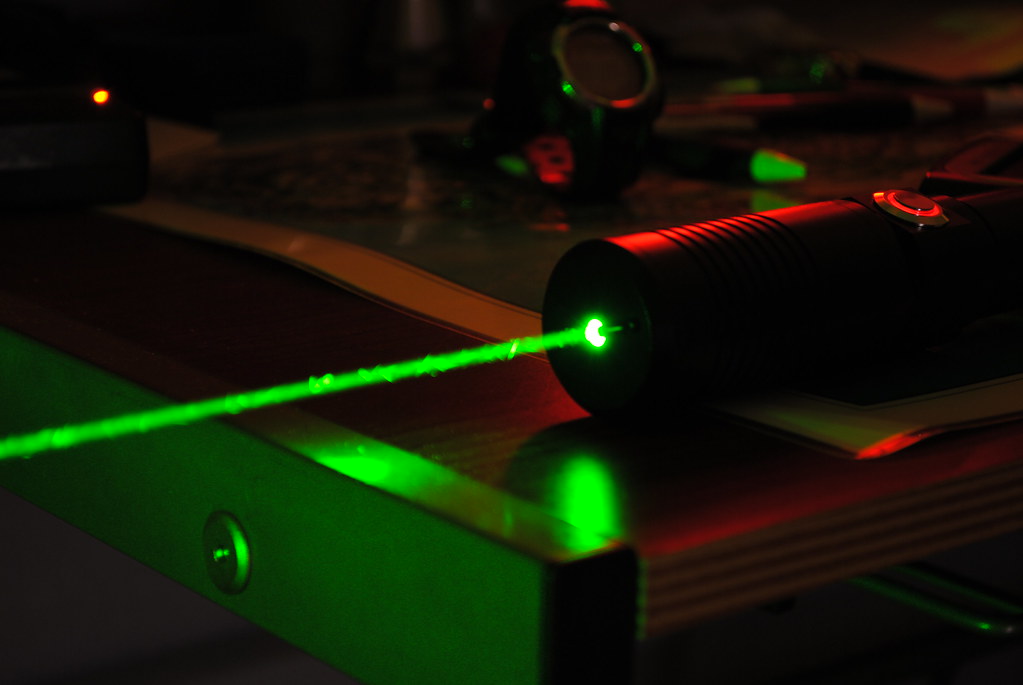

A recent stream of neutral-atom hardware work reframes that bottleneck as a photonics problem. Rather than asking how to create better qubits, it asks how to project, control, and stabilize large patterns of light that can hold identical atoms in place, quietly enough to be useful.

1. Metasurface arrays turn one laser into thousands of traps

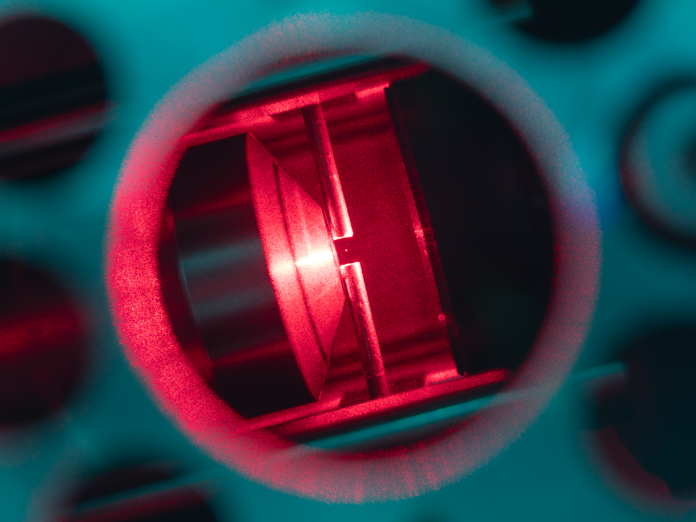

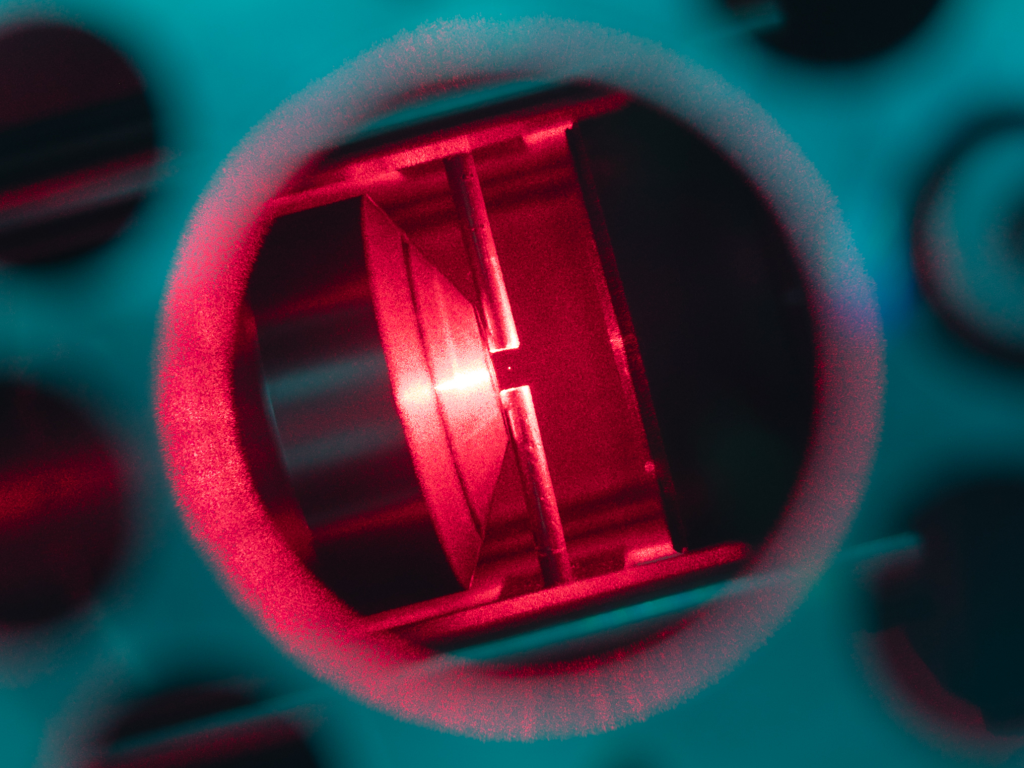

Optical tweezers are not mechanical instruments but tightly focused beams that can hold single atoms at their focal points. What has changed is how those focal points are generated. Instead of bulky beam-shaping stacks, Columbia researchers built metasurfaces: flat optics composed of nanostructured pixels that shape a single incoming beam into a designed field of many traps.

The reported platform trapped 1,000 strontium atoms, each a candidate qubit, in highly uniform two-dimensional patterns. The engineering promise lies less in that number than in the pattern: the trap layout is encoded in a fabricated surface, so scaling becomes a matter of device size, optical power, and system integration rather than of multiplying conventional steering hardware.

2. Why identical atoms change the scaling discussion

On many quantum platforms, engineering effort is consumed by accommodating small variations between qubits, such as frequency differences, fabrication variance, or drift. Neutral atoms start from a different point: every atom of a particular isotope is essentially identical. That uniformity obviates much of the per-qubit characterization that would otherwise grow with the array, an operational benefit when control systems are already strained by thousands of channels.

“Atoms are nature’s own qubits; perfectly identical and massively abundant. The bottleneck has always been finding a way to control them at scale,” said Aaron Holman, one of the researchers.

3. From “more qubits” to “more usable qubits”

Qubits cannot be scaled simply by packing more delicate elements into a smaller area. Every additional qubit introduces another source of noise through which errors can propagate. This is why large-scale machines are often framed in terms of quantum error correction, in which a large number of physical qubits are used to implement a small number of logical qubits.

The metasurface strategy targets the physical layer that error correction relies on: uniform qubit behavior over a broad field. By bending light with subwavelength patterns, metasurfaces enable dense trap patterns with fewer alignment requirements than conventional modulators, narrowing the gap between laboratory demonstrations and the requirements of error-corrected architectures.
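To give a feel for the physical-to-logical overhead described above, here is a minimal sketch. It assumes a rotated surface code, which needs 2d² − 1 physical qubits per logical qubit at code distance d; the article does not say which code these platforms would use, and the distances chosen are purely illustrative. The function names are hypothetical, and the estimate ignores routing, spare atoms, and unfilled sites.

```python
def physical_per_logical(distance: int) -> int:
    """Physical qubits for one rotated-surface-code logical qubit.

    d*d data qubits plus d*d - 1 measurement qubits.
    Assumption: the surface code is only an illustrative choice here.
    """
    return 2 * distance * distance - 1


def logical_qubits(sites: int, distance: int) -> int:
    """Upper bound on logical qubits if every trap site held a usable atom."""
    return sites // physical_per_logical(distance)


# 1,000 atoms (the reported demonstration) at an illustrative distance of 5
print(logical_qubits(1_000, 5))
# 360,000 sites (the reported array capacity) at an illustrative distance of 15
print(logical_qubits(360_000, 15))
```

Under these assumptions, a thousand atoms supports only a couple of dozen logical qubits at best, which is why the size of the uniform trap field matters so much.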

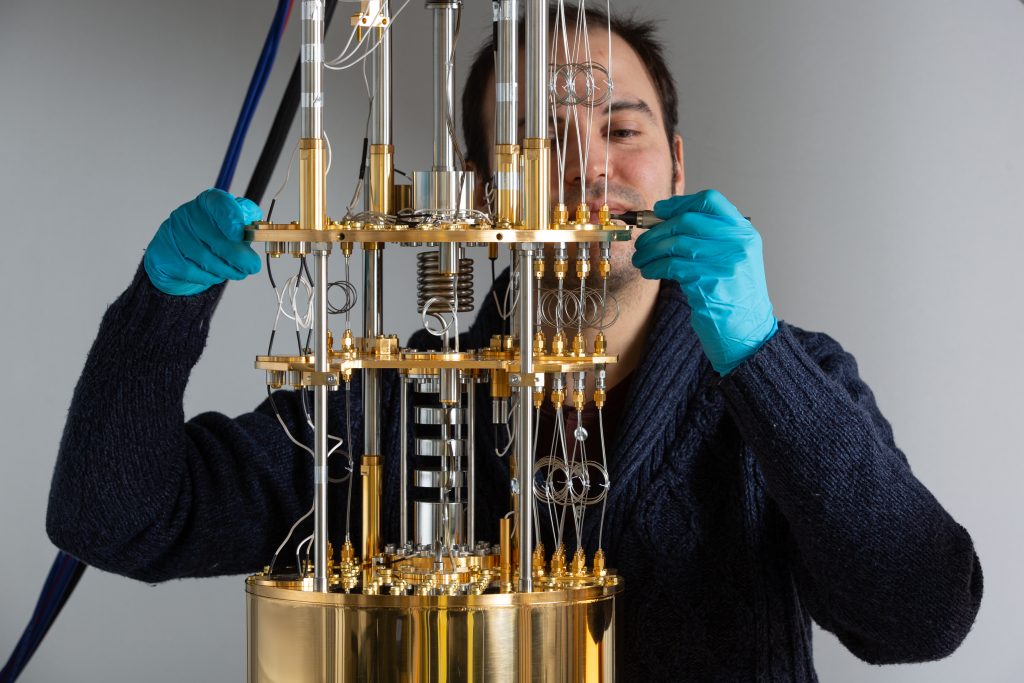

4. The scale of the geometry: 360,000 possible sites

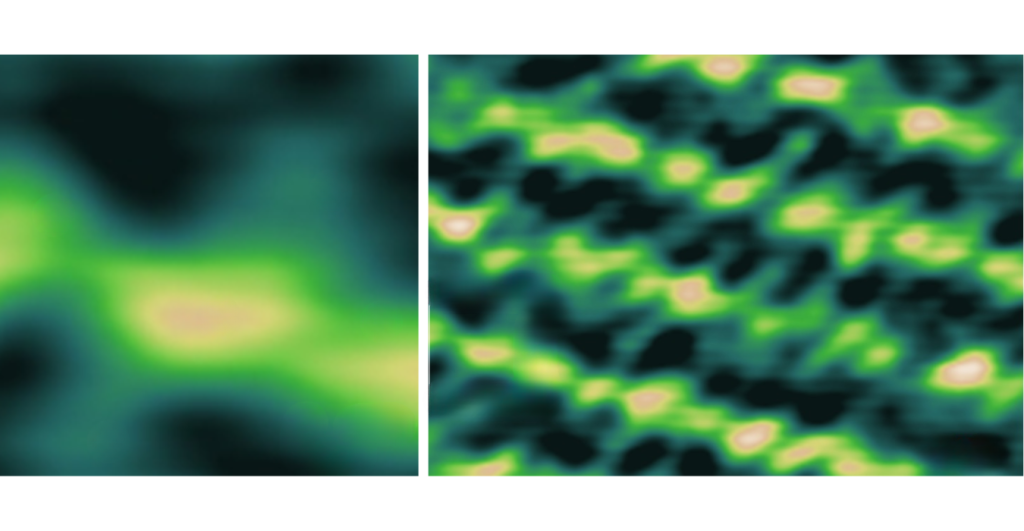

The same work showed a design capable of producing significantly larger tweezer arrays than current methods normally allow. A metasurface of more than 100 million pixels, 3.5 mm in diameter, produced a 600 x 600 array, or 360,000 optical tweezers.

Not every one of those sites was occupied by atoms in the reported trapping demonstration. The photonic capacity still matters: the question is no longer how many traps can be made, but how many atoms can be loaded today and where the next limits lie.
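The figures quoted above can be checked with a few lines of arithmetic. This sketch uses only the numbers in the text (600 x 600 sites, 100 million pixels, 3.5 mm diameter) and assumes a circular aperture uniformly tiled with square pixels; that geometry is an assumption for illustration, not a description of the actual device. Because the text says "more than" 100 million pixels, the pitch computed here is an upper bound.

```python
import math

PIXELS = 100_000_000   # lower bound taken from the text ("more than 100 million")
DIAMETER_MM = 3.5      # metasurface diameter from the text
ARRAY_SIDE = 600       # tweezers per side from the text


def tweezer_sites(side: int) -> int:
    """Number of sites in a square side x side array."""
    return side * side


def pixels_per_site(pixels: int, sites: int) -> float:
    """Average number of metasurface pixels available per tweezer site."""
    return pixels / sites


def mean_pixel_pitch_nm(pixels: int, diameter_mm: float) -> float:
    """Average pixel pitch, assuming square pixels tiling a circular aperture."""
    area_mm2 = math.pi * (diameter_mm / 2) ** 2
    pitch_mm = math.sqrt(area_mm2 / pixels)
    return pitch_mm * 1e6  # mm -> nm


sites = tweezer_sites(ARRAY_SIDE)
print(sites)                                            # 360000
print(round(pixels_per_site(PIXELS, sites)))            # 278
print(round(mean_pixel_pitch_nm(PIXELS, DIAMETER_MM)))  # 310
```

A pitch of roughly 310 nm is below the wavelength of visible and near-infrared trapping light, which is consistent with the description of metasurfaces as subwavelength-patterned optics.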

5. The real limiter is laser power, not optical complexity

Traditional large tweezer arrays usually hit a practical limit because their steering and shaping systems are complex, sensitive, and hard to scale. Metasurfaces reverse that relationship. Once the pattern is fabricated, growth of the system is governed less by multiplying active components and more by available optical power and thermal control.

In the Columbia work, scaling from thousands to 100,000+ trapped atoms was presented as a realistic path, provided more powerful lasers become available. The metasurfaces themselves were also reported to withstand optical intensities in excess of 2,000 W/mm², a materials-and-thermal point that directly influences whether arrays can be made dense without compromising the optics.
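A rough power budget illustrates why laser power, rather than the optic, becomes the limiting resource. In the sketch below, only the 2,000 W/mm² tolerance and the 100,000-atom target come from the text. The power per trap (5 mW), the metasurface efficiency (0.5), and the reuse of a 3.5 mm aperture with uniform illumination are placeholder assumptions chosen for illustration, not reported values.

```python
import math

REPORTED_TOLERANCE_W_PER_MM2 = 2000.0  # from the text ("in excess of 2,000 W/mm²")

# Placeholder assumptions, NOT reported values:
POWER_PER_TRAP_MW = 5.0   # hypothetical optical power needed per tweezer
EFFICIENCY = 0.5          # hypothetical fraction of input light reaching the traps
DIAMETER_MM = 3.5         # assumes the same aperture as the 600 x 600 design


def required_laser_power_w(n_traps: int, per_trap_mw: float, efficiency: float) -> float:
    """Input laser power needed to deliver per_trap_mw to each of n_traps."""
    return n_traps * per_trap_mw / 1000.0 / efficiency


def mean_intensity_w_per_mm2(power_w: float, diameter_mm: float) -> float:
    """Average intensity for uniform illumination of a circular aperture."""
    area_mm2 = math.pi * (diameter_mm / 2) ** 2
    return power_w / area_mm2


power = required_laser_power_w(100_000, POWER_PER_TRAP_MW, EFFICIENCY)
intensity = mean_intensity_w_per_mm2(power, DIAMETER_MM)
print(f"laser power: {power:.0f} W")
print(f"mean intensity on optic: {intensity:.0f} W/mm^2")
print("within reported tolerance:", intensity < REPORTED_TOLERANCE_W_PER_MM2)
```

With these placeholder numbers the optic sees an average intensity well below the reported tolerance, while the laser itself would need to deliver on the order of a kilowatt. Different per-trap powers or efficiencies would shift the totals, but the linear scaling with trap count is the point.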

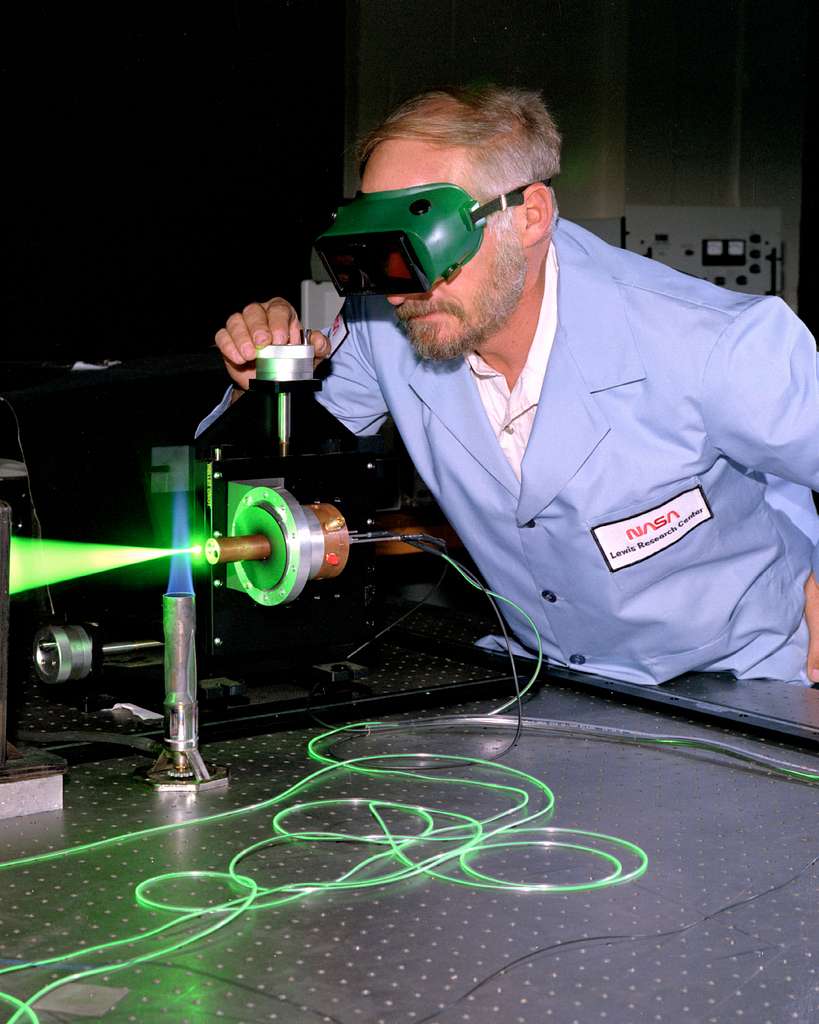

6. Readout is another scaling wall, and cavity arrays offer a parallel path

The system problem is not only trapping and coherent control of atoms; large machines also require fast, parallel readout. In a separate effort published in Nature, Stanford researchers reported an optical cavity array that directs photons from atoms more effectively, demonstrated with a 40-cavity array and a prototype of 500 or more cavities.

Jon Simon explained that building a quantum computer requires being able to read out the information in the quantum bits at a very high rate. The engineering reasoning matches that of the metasurface tweezers: the interface (in this case, light collection) needs to be parallel and repeatable, not channel-by-channel.

Together, these strategies outline a hardware philosophy for quantum scale: build more structure into fabricated photonics, so that adding qubits is like tiling designed optical functions rather than growing a forest of carefully tuned components.

The rest of the challenge is not hidden: large arrays still require stable vacuum systems, very accurate timing, low-noise lasers, and control compatible with error correction. However, metasurface tweezer chips and cavity-array readout shift where the most challenging constraints reside, which is typically the first indication of a transition to architectures larger than a laboratory demonstration.